The rapid proliferation of artificial intelligence (AI), especially generative AI systems, has transformed the epistemic framework of scientific writing. In a significant study published in JAMA (Vol. 335, No. 8), Isamme AlFayyad, Maurice P. Zeegers, Lex Bouter, and colleagues examine a critical governance issue in current scholarship: how often academics declare the use of AI in manuscript submissions. Their study, “Self-Disclosed Use of AI in Research Submissions to BMJ Journals,” analyzes 25,114 research manuscripts submitted to 49 journals within the BMJ Group from April to November 2024, after the introduction of compulsory AI disclosure questions.

This study provides one of the first large-scale empirical datasets on AI transparency in scientific publishing. It goes beyond self-report surveys, in which 28% to 76% of respondents said they used AI, to examine what authors actually disclosed at the point of submission.

Prevalence: A Gap in Disclosure

Out of 25,114 valid submissions, 1,431 manuscripts (5.7%) disclosed the use of AI. This figure is far lower than the usage that surveys suggest. It is consistent with a comparable study of over 100,000 manuscripts submitted to JAMA and JAMA Network journals, which found a disclosure rate of 3.3%. Together, these results point to a substantial gap in transparency.
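To make the size of that gap concrete, the short Python sketch below recomputes the disclosure rate from the counts quoted above and compares it with the 28% to 76% survey range. The Wilson interval is an illustrative addition, not a figure reported in the study, and the function and variable names are hypothetical.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion (illustrative only)."""
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


# Counts quoted in the article.
disclosed, total = 1431, 25114
rate = disclosed / total
low, high = wilson_interval(disclosed, total)

# Self-reported usage range from surveys, as quoted in the article.
survey_low, survey_high = 0.28, 0.76

print(f"Disclosure rate: {rate:.1%}")
print(f"Approximate 95% interval: {low:.1%} to {high:.1%}")
print(f"Survey estimates are {survey_low / rate:.1f}x to {survey_high / rate:.1f}x the disclosed rate")
```

On these numbers, self-reported usage is roughly 5 to 13 times the disclosed rate, which is the gap the article describes.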

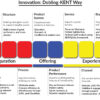

The regression table shows odds ratios adjusted for six covariates: the journal’s acceptance rate, the Impact Factor, the peer review model, the journal’s specialization, the region of the submitting author, and the number of authors. The statistical approach, binomial logistic regression with adjusted ORs, strengthens confidence in the reported associations.

Chatbots (56.7%) were the most commonly reported tools, with ChatGPT the most popular. Writing assistants (12.7%), mostly Grammarly, were the second most common. Notably, 31.4% of submissions that disclosed AI use did not name the tool, which could mean that the reporting was unclear or incomplete.

AI as a Writing-Enhancement Tool

Of the authors who disclosed AI use, 87.2% said they used it to “enhance the quality of writing.” This suggests that AI currently serves more as a language-enhancement aid than as a data-analysis or hypothesis-generating tool in biomedical research submissions.

This aligns with what large language models are designed to do. Generative AI excels at tasks such as paraphrasing, summarizing, correcting grammar, and clarifying the structure of a text. These tasks reduce the cognitive load of writing, but they may not directly affect how research is conducted. As A. P. J. Abdul Kalam, a former president of India, said, “Excellence is a continuous process and not an accident.” Here, AI is being used to refine the process rather than to replace human work.

On the global stage, AI researcher Fei-Fei Li has said that “AI is not a substitute for human intelligence; it is a tool to amplify human creativity.” The BMJ data show that amplification, especially linguistic amplification, is the most common use case right now.

Regional Differences: Culture, Access, or Policy?

The regression analysis showed that authors from South America (OR 1.75; 95% CI 1.22–2.49) and Europe (OR 1.28; 95% CI 1.14–1.45) were significantly more likely to disclose AI use than authors from Asia. No statistically significant differences were found between The BMJ and other BMJ journals or between journal specialties.
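One way to read these odds ratios is to check whether each 95% confidence interval excludes 1 and to back out an approximate standard error on the log scale. The sketch below does this for the two figures quoted above. It assumes the intervals are symmetric on the log-odds scale (the usual normal approximation), and the helper name `summarize_or` is hypothetical. It does not reproduce the study's regression.

```python
from math import log


def summarize_or(or_point: float, ci_low: float, ci_high: float, z: float = 1.96) -> dict:
    """Summarize a reported odds ratio, assuming a normal approximation on the log scale."""
    # The width of the log-scale interval is about 2 * z * SE.
    se = (log(ci_high) - log(ci_low)) / (2 * z)
    return {
        "excludes_one": ci_low > 1 or ci_high < 1,   # significant at about the 5% level
        "log_or_se": se,                             # approximate SE of log(OR)
        "z_stat": log(or_point) / se,                # approximate Wald statistic
        "pct_change_in_odds": (or_point - 1) * 100,  # e.g. OR 1.28 means 28% higher odds
    }


# Values quoted in the article; Asia is the reference region.
south_america = summarize_or(1.75, 1.22, 2.49)
europe = summarize_or(1.28, 1.14, 1.45)

for name, result in [("South America", south_america), ("Europe", europe)]:
    print(name, {key: round(val, 3) if isinstance(val, float) else val
                 for key, val in result.items()})
```

Both intervals exclude 1, which is what "statistically significant" means here. Note that an odds ratio describes odds, not probabilities, so OR 1.75 means 75% higher odds of disclosure, not a 75% higher disclosure rate.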

These regional patterns call for a more in-depth analysis. The differences may reflect:

• Variations in institutional frameworks for governing AI

• Differences in access to generative AI tools

• Cultural norms about openness and disclosure

• How clearly editors communicate disclosure requirements

India, for example, has seen a sharp rise in the use of AI in health technology and academia, but formal disclosure standards are still evolving and vary between institutions. NASSCOM surveys have pointed out that India is on track to become a global hub for AI talent, yet regulation has not kept pace. The BMJ data may reflect this policy transition.

Underreporting and Unclear Ethics

The authors’ central point is a sobering one: disclosure rates probably understate how much AI is actually being used. Possible reasons include:

1. Intentional omission.

2. Uncertainty about what counts as “AI use.”

3. Limited awareness of AI’s role as a coauthor.

This is consistent with broader concerns about how opaque AI’s role in scientific work can be. If authors define “AI use” too narrowly (for example, by excluding grammar-correction tools), the mandatory disclosure questions that the BMJ Group has introduced may have limited effect.

Technological detection also remains difficult. Watermarking of AI-generated text is still in its early stages, stylometric detection methods produce false positives, and hybrid human-AI writing blurs the line further. Policy tools therefore need to evolve alongside detection tools.

Consequences for Academic Integrity

From the perspective of academic governance, the results highlight three essential requirements:

1. Define Terms: Journals need to specify whether grammar correction, translation, idea generation, or data analysis counts as reportable AI use.

2. Standardize Disclosure Taxonomies: The function-based taxonomy used in this study offers a reusable model.

3. Invest in Education: Researchers need systematic training on how to use AI responsibly.

For medical schools, especially in developing countries, this is not only a writing issue but also a skills-development issue. AI literacy should be included in research methodology curricula.

A Time of Change in Scientific Publishing

AlFayyad et al.’s study offers an early look at how scholarly communication is changing in practice. The increase in disclosure from 4.5% in April to 7.3% in October suggests that the normalization of AI transparency may already be under way.

The overall rate of 5.7%, far lower than survey estimates of AI use, suggests that scientific publishing is at a turning point. As generative AI becomes more common, norms of transparency must evolve with it.

It is important to recognize JAMA for publishing this study and the BMJ Group for putting the disclosure policy into practice. By building AI transparency into submission systems, they are laying the ethical foundation for future scholarship.

Rabindranath Tagore, the Indian poet and philosopher, said, “Faith is the bird that feels the light and sings when the dawn is still dark.” Scientific publishing today stands at a new dawn. Transparency in the use of AI should be the ethical norm, not the exception.

Dr. Prahlada N.B

MBBS (JJMMC), MS (PGIMER, Chandigarh).

MBA in Healthcare & Hospital Management (BITS, Pilani),

Postgraduate Certificate in Technology Leadership and Innovation (MIT, USA)

Executive Programme in Strategic Management (IIM, Lucknow)

Senior Management Programme in Healthcare Management (IIM, Kozhikode)

Advanced Certificate in AI for Digital Health and Imaging Program (IISc, Bengaluru).

Senior Professor and former Head,

Department of ENT-Head & Neck Surgery, Skull Base Surgery, Cochlear Implant Surgery.

Basaveshwara Medical College & Hospital, Chitradurga, Karnataka, India.

My Vision: I don’t want to be a genius. I want to be a person with a bundle of experience.

My Mission: Help others achieve their life’s objectives in my presence or absence!
