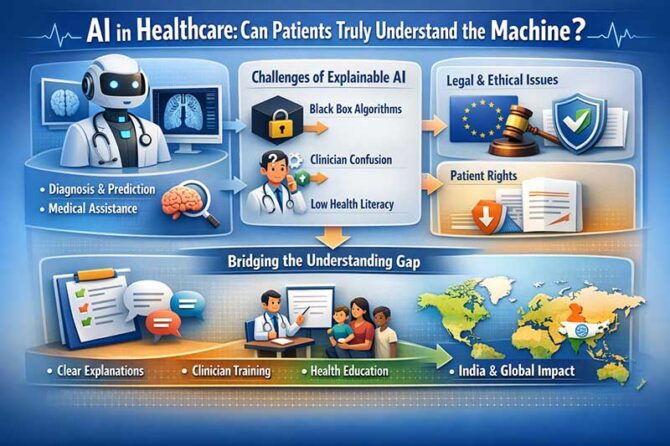

The rapid adoption of artificial intelligence (AI) in healthcare has created a new ethical and technological imperative: the “right to understand.” Anshu Ankolekar examines this issue in “The Right to Understand in Health Care AI” (Journal of Medical Internet Research, 2026). The European Union’s AI Act (2024), together with the General Data Protection Regulation (GDPR), has begun to formalize a patient’s right to understand decisions made by AI in healthcare. Yet while the legal framework is taking shape, patients’ practical ability to understand those decisions lags behind, which poses a challenge for healthcare practitioners and policymakers around the world, including in India.

AI could transform healthcare in many ways. From image interpretation to predictive analytics for intensive care unit patients, AI systems have shown high accuracy, in some tasks matching or surpassing human performance. For example, deep learning models that detect malignancies in medical images have shown high sensitivity for cancers at their earliest stages. The World Health Organization (WHO) has emphasized that AI can improve diagnosis, treatment, and access to care in health systems around the world, especially in developing regions such as rural India.

However, these advantages come with a critical trade-off: opacity. Many high-performing AI systems function as ‘black boxes,’ producing outputs through complex interactions that even their developers do not fully understand. Cynthia Rudin (2019) has argued that relying on such opaque systems in high-stakes domains like health is problematic and has advocated for interpretable models instead. As Ankolekar discusses, however, interpretability can come at a cost to diagnostic accuracy.

From a clinical point of view, these issues present serious challenges. Physicians are frequently given AI-generated confidence scores and recommendations without a clear rationale. This leaves them in the uncomfortable position of having to explain decisions they do not fully understand themselves. Time constraints make matters worse. Studies of human-computer interaction and clinical practice have shown that physicians struggle to balance communication with time efficiency, and adding AI creates a further cognitive and communicative burden. Moreover, automation bias, in which physicians over-rely on AI recommendations, can result in diagnostic errors. Radiology studies have shown that errors in AI recommendations lead to errors in human judgment.

The patient side adds another layer of complexity. Health literacy is a global health challenge: between 22% and 58% of European citizens have difficulty understanding health-related information. Similar or even greater disparities exist in India because of linguistic diversity and educational and digital divides. Gigerenzer and Edwards (2003) have shown that even educated people struggle with probabilistic reasoning, which makes the statistical outputs of AI hard to interpret. Over-explaining these results can reduce understanding rather than improve it, and may lead patients simply to defer to the doctor’s authority.

This disconnect between legal rights and usability requires a paradigm shift, moving beyond compliance to meaningful communication. As Ankolekar points out, the question is not whether an explanation is given, but whether it is useful to the patient. Effective explanations prioritize decision relevance over algorithmic detail. For instance, rather than explaining the architecture of the neural network, the clinician might tell the patient what the AI recommends, how confident it is, what remains uncertain, and what alternatives are available. This is consistent with the shared decision-making model of Elwyn et al. (2012).
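For readers who build or evaluate such tools, a decision-relevant explanation can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `DecisionSummary` class, the `natural_frequency` and `explain` helpers, and the sample values are all hypothetical, and the sketch assumes the model's confidence score is well calibrated, which would need to be verified before phrasing it as "N out of 100."

```python
from dataclasses import dataclass, field


@dataclass
class DecisionSummary:
    """Hypothetical container for the four items a clinician might convey."""
    recommendation: str
    confidence: float  # model probability in [0, 1]; assumed to be calibrated
    uncertainties: list[str] = field(default_factory=list)
    alternatives: list[str] = field(default_factory=list)


def natural_frequency(p: float, out_of: int = 100) -> str:
    """Restate a probability as 'about N out of M' instead of a percentage."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return f"about {round(p * out_of)} out of {out_of}"


def explain(summary: DecisionSummary) -> str:
    """Build a plain-language summary; nothing about model internals appears."""
    lines = [
        f"What the AI suggests: {summary.recommendation}",
        "How sure it is: correct in "
        f"{natural_frequency(summary.confidence)} similar cases",
    ]
    if summary.uncertainties:
        lines.append("What is uncertain: " + "; ".join(summary.uncertainties))
    if summary.alternatives:
        lines.append("Other options: " + "; ".join(summary.alternatives))
    return "\n".join(lines)


# Illustrative values only; not taken from any real system or patient.
example = DecisionSummary(
    recommendation="biopsy of the flagged area",
    confidence=0.87,
    uncertainties=["image quality was limited"],
    alternatives=["repeat imaging in three months"],
)
print(explain(example))
```

The design choice here is to restate the confidence as a frequency rather than a percentage and to leave out anything about how the model works, so the output stays focused on the decision the patient faces.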

For the Indian subcontinent, explaining AI in healthcare is especially important. With the rapid uptake of AI in telemedicine, outsourced radiology, and digital diagnostics, ensuring that the poor are not marginalized by this technology is essential to avoiding the specter of “algorithmic inequality.” As Dr. Soumya Swaminathan, the former Chief Scientist at the WHO, has said, “Technology must reduce inequity, not widen it.”

The path forward requires a collaborative effort from all stakeholders. Developers need to design and test their explanations with patients in mind rather than focusing only on regulatory compliance. Healthcare facilities need to invest in training clinicians and give them the time to discuss AI with their patients. Policymakers, especially in countries like India, need to promote digital health literacy among the public and develop specific guidelines for the use of AI.

Thus, the right to understand AI in healthcare sits where technology, ethics, and autonomy meet. Although the EU AI Act is a strong starting point for regulating AI in healthcare, the challenge now is to implement this right effectively. As Eric Topol puts it in *Deep Medicine*, “The real promise of AI is not to replace doctors, but to give them back the time and insight to be better doctors.” Patients’ ability to understand AI decisions is key to fulfilling that promise, in India and around the world.

Dr. Prahlada N.B

MBBS (JJMMC), MS (PGIMER, Chandigarh).

MBA in Healthcare & Hospital Management (BITS, Pilani),

Postgraduate Certificate in Technology Leadership and Innovation (MIT, USA)

Executive Programme in Strategic Management (IIM, Lucknow)

Senior Management Programme in Healthcare Management (IIM, Kozhikode)

Advanced Certificate in AI for Digital Health and Imaging Program (IISc, Bengaluru).

Senior Professor and former Head,

Department of ENT-Head & Neck Surgery, Skull Base Surgery, Cochlear Implant Surgery.

Basaveshwara Medical College & Hospital, Chitradurga, Karnataka, India.

My Vision: I don’t want to be a genius. I want to be a person with a bundle of experience.

My Mission: Help others achieve their life’s objectives in my presence or absence!

My Values: Creating value for others.

References:

Ankolekar A. The Right to Understand in Health Care AI. J Med Internet Res. 2026;28:e95090.

Rudin C. Stop explaining black box machine learning models. Nat Mach Intell. 2019.

Gigerenzer G, Edwards A. Simple tools for understanding risks. BMJ. 2003.

Elwyn G et al. Shared decision making: a model for clinical practice. J Gen Intern Med. 2012.

WHO. Ethics and Governance of Artificial Intelligence for Health. 2021.
