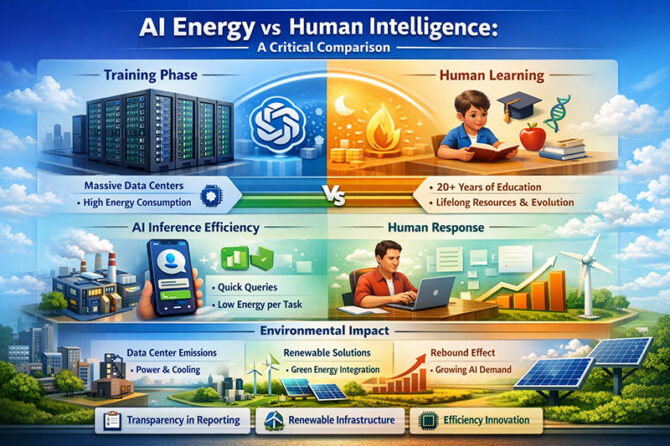

One of the most consequential technology debates of the past decade concerns the environmental cost of AI. Concerns about data centers’ electricity use, cooling water, and carbon emissions have grown as generative models have become larger and more popular. Asked about AI’s environmental impact, Sam Altman offered a controversial comparison: the cost of training an AI model should not be set against the cost of one person answering a question. Before calling AI inefficient, he said, one needs to look at the full “training cost” of a person, which includes decades of education, food, and even evolutionary history.

The comparison is bold, thought-provoking, and technically debatable.

Training large language models requires a great deal of computing power. In 2019, Emma Strubell and colleagues published an influential study showing that training a large NLP model could emit as much carbon as five cars over their lifetimes (Strubell et al., ACL 2019). Newer frontier models from OpenAI, Google, and Anthropic are trained on clusters of thousands of GPUs that often run nonstop for weeks.

According to the International Energy Agency (IEA, 2023), data centers account for roughly 1–1.5% of global electricity use. Experts suggest this share could rise substantially by 2030 as AI workloads grow. Water use is another concern: cooling large data centers can consume millions of liters of water a year.

From an Indian perspective, the environmental implications are significant. India’s digital economy is growing rapidly, driven by major cloud investments in Chennai, Hyderabad, and Mumbai, and the country must expand renewable energy and AI capacity at the same time. As the government advances its National AI Strategy, building sustainable infrastructure will be necessary to avoid over-dependence on coal-heavy grids.

The “Human Training” Analogy: Is it Right or Wrong?

Instead of comparing AI training to one person answering a question, Altman compares it to the overall cost of developing human intelligence. This reframes the issue.

At first sight, the comparison seems compelling. People need food, medical care, schooling, and infrastructure for decades. Education systems around the world consume substantial resources: buildings, transportation, energy, textbooks, and digital devices. And scientific knowledge has been built up over time by billions of people working together.

Critics, on the other hand, argue that this parallel conflates biological and digital systems in ways that obscure accountability. A person’s environmental footprint supports far more than answering questions; it enables creativity, empathy, and governance. AI training, by contrast, is narrowly focused on specific tasks.

Dr. Kate Crawford, author of Atlas of AI, says that “AI systems are not abstract intelligence; they are material systems that need energy, water, and labour.” On this view, evolutionary analogies are no substitute for environmental accounting.

But Altman’s point about inference efficiency is worth considering. Once trained, AI systems can answer millions of questions with relatively little energy per question. This may be comparable to, or even less than, the energy used when people do the same cognitive work with the help of computers.

A more useful comparison is energy per task. If a professional spends 10 minutes researching and writing a response on a laptop drawing 50 to 100 watts, the total energy used (30,000 to 60,000 watt-seconds, or roughly 8 to 17 watt-hours) may equal or exceed that of an optimized AI inference running in a hyperscale data center powered by renewable energy.
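The per-task comparison can be made concrete with a little arithmetic. The sketch below uses the laptop figures from the paragraph above (50 to 100 watts for 10 minutes); the per-query inference energy is not given in this article, so `ASSUMED_INFERENCE_WH` is a purely hypothetical placeholder that readers should replace with a measured value. The function names are illustrative as well.

```python
def task_energy_wh(power_watts: float, minutes: float) -> float:
    """Energy in watt-hours for a device drawing power_watts for the given minutes."""
    return power_watts * minutes / 60.0


def wh_to_watt_seconds(energy_wh: float) -> float:
    """Convert watt-hours to watt-seconds (joules)."""
    return energy_wh * 3600.0


def break_even_queries(task_wh: float, inference_wh_per_query: float) -> float:
    """How many AI queries use the same energy as the human-plus-laptop task."""
    if inference_wh_per_query <= 0:
        raise ValueError("inference_wh_per_query must be positive")
    return task_wh / inference_wh_per_query


# Laptop figures taken from the article: 50-100 W for 10 minutes.
low_wh = task_energy_wh(50, 10)
high_wh = task_energy_wh(100, 10)

# ASSUMPTION: hypothetical placeholder, not a measured or published figure.
ASSUMED_INFERENCE_WH = 1.0

print(f"Laptop task: {low_wh:.2f} to {high_wh:.2f} Wh")
print(f"Break-even queries at the assumed inference cost: "
      f"{break_even_queries(low_wh, ASSUMED_INFERENCE_WH):.1f} to "
      f"{break_even_queries(high_wh, ASSUMED_INFERENCE_WH):.1f}")
```

The comparison only favours AI if a task is completed in fewer queries than the break-even number, and the result is only as good as the per-query figure supplied.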

A 2023 study from the University of Massachusetts Amherst found that training consumes a lot of energy, but inference at scale can be very efficient, especially when using specialized hardware like TPUs and better cooling systems.

In India, AI-driven automation in radiology triage, agricultural advice, or government grievance redressal could cut unnecessary human effort and travel, indirectly lowering emissions. For example, AI-assisted telemedicine platforms could reduce the need for patients to travel, which is a major burden in rural and semi-urban areas.

Microsoft and Google, among other companies, have pledged to become carbon-negative or to run their data centers worldwide on carbon-free energy. If AI inference runs on infrastructure powered by renewable sources, the marginal environmental cost of each query may keep falling.

A Warning About the Rebound Effect

But efficiency gains often trigger a “rebound effect.” As AI becomes cheaper and easier to access, more and more people use it. Around the world, generative AI systems currently handle billions of prompts every day. Even if each query is efficient, the overall growth in demand may wipe out the gains.

In the 19th century, the economist William Stanley Jevons observed that making coal use more efficient increased total coal consumption rather than reducing it. AI might follow a similar path.

India’s growing smartphone use and digital literacy could cause a sharp rise in demand for AI. Policymakers therefore need to consider the system-wide impact of AI use, not just per-query efficiency.
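The rebound effect comes down to one ratio: total energy is per-query energy multiplied by query volume, so consumption rises whenever demand grows faster than efficiency improves. The sketch below illustrates this; the function name and the example factors are hypothetical and chosen only for illustration, not drawn from any measurement.

```python
def rebound_ratio(efficiency_gain: float, demand_growth: float) -> float:
    """Ratio of new total energy to old total energy.

    efficiency_gain: factor by which per-query energy falls (2.0 means half).
    demand_growth:   factor by which the number of queries rises.
    A result above 1.0 means total consumption increased despite the
    efficiency gain (a Jevons-style rebound).
    """
    if efficiency_gain <= 0 or demand_growth < 0:
        raise ValueError("efficiency_gain must be positive and demand_growth non-negative")
    return demand_growth / efficiency_gain


# ASSUMPTION: illustrative factors only.
# Per-query energy halves, but query volume grows fivefold.
print(rebound_ratio(efficiency_gain=2.0, demand_growth=5.0))  # 2.5: total energy rises

# Per-query energy falls fourfold while query volume only doubles.
print(rebound_ratio(efficiency_gain=4.0, demand_growth=2.0))  # 0.5: total energy falls
```

This is why per-query efficiency alone says little about the system as a whole: the same efficiency gain can halve or more than double total consumption depending on how demand responds.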

Advice: How to Use AI to Manage Energy Responsibly

From a governance point of view, three principles are particularly important:

1. Transparent energy reporting: AI companies should disclose training and inference energy metrics, much as ESG reporting frameworks require for other environmental data.

2. Renewable-powered infrastructure: AI data centers should be encouraged to run on solar, wind, or hydroelectric power, especially in fast-growing markets like India.

3. Efficiency innovation: Ongoing research on model compression, sparsity techniques, and edge inference can substantially lower energy intensity.

India’s G20 leadership on digital public infrastructure offers a chance to make “Green AI” a global standard, one that balances innovation with environmental stewardship.

Conclusion: More than a Metaphor

Altman’s analogy invites a reassessment of reductive comparisons between AI and human cognition. It raises an important point: intelligence, whether biological or artificial, is resource-intensive.

But environmental responsibility requires accountability that can be measured in the present. The relevant question is not whether AI’s cost compares with the evolutionary cost of humanity, but whether its marginal utility justifies its marginal emissions, and whether those emissions are falling over time.

As India and the rest of the world rapidly enter an age driven by AI, the goal is not to impede progress but to make sure that technical and ecological intelligence grow together.

“The Earth gives enough to meet everyone’s needs, but not everyone’s greed,” remarked Mahatma Gandhi. The same may soon be true of artificial intelligence.

Dr. Prahlada N.B

MBBS (JJMMC), MS (PGIMER, Chandigarh).

MBA in Healthcare & Hospital Management (BITS, Pilani),

Postgraduate Certificate in Technology Leadership and Innovation (MIT, USA)

Executive Programme in Strategic Management (IIM, Lucknow)

Senior Management Programme in Healthcare Management (IIM, Kozhikode)

Advanced Certificate in AI for Digital Health and Imaging Program (IISc, Bengaluru).

Senior Professor and former Head,

Department of ENT-Head & Neck Surgery, Skull Base Surgery, Cochlear Implant Surgery.

Basaveshwara Medical College & Hospital, Chitradurga, Karnataka, India.

My Vision: I don’t want to be a genius. I want to be a person with a bundle of experience.

My Mission: Help others achieve their life’s objectives in my presence or absence!
